
Introduction

Building a new data system can be a complex and multifaceted activity. A structured approach and clear specifications make it easier to understand the variety of needs and considerations that must be addressed to create a successful solution.

Working through the process with your team helps build greater commitment for the project and ultimately satisfaction with the result.

This simple tool walks you through the basics of developing a system, and provides the elements of a specification that you can use to jumpstart your development effort.

Ready to build your new data system?

Development Process

Implementing a new data system is a complex undertaking. Achieving a good solution requires gaining a solid understanding of your users and their needs; communicating your requirements clearly and accurately; implementing a solution that is consistent, reliable and easy to use; and providing appropriate training and support. To help you get the best result from your data system implementation effort, we have outlined a proven implementation method below. Each of its six phases contributes to the end result.

Phase I–Discovery

During the discovery phase, you will gather the information needed to build or procure a system that fits your functional, reporting and operational needs. Because systems are more complex than ever before, and there is often a wide range of stakeholders, this effort will take some time. Your goal in this part of the process is to understand which capabilities the system will need, and the conditions and environment in which it will operate. Here are some steps for planning a successful discovery phase.

Identify Your Stakeholders

Determine who will use, maintain, manage or have responsibility for any aspect of the system. These are your key stakeholders. Your ability to meet their needs will heavily influence the success of the new data system. The following is a list of some possible stakeholders. Consider individuals in all roles who will operate the system or use any of its reports or other outputs.

Program Administrators, Teachers, and Data-entry Staff

Program administration and staff understand daily operational and reporting needs. Because they are responsible for hands-on data entry and generating reports as an integral part of their jobs, their insights are essential for determining the size and shape of the system and its functionality. Their acceptance of the system helps assure its success.

State Adult Education Staff

State staff are responsible for accountability, administration, federal and state reporting, and the policies and practices that affect adult education statewide. They provide vital input on which data to manage, the business rules that govern how student information is tracked, operating requirements for programs, and reporting features.

Department of Labor Partners Under WIOA

Staff at the state's labor agency have specific requirements and reporting needs applicable to their mission, and relevant to adult education. They may have differing needs and data management requirements for student records, demographics, outcomes and timelines that need to be addressed or accommodated within the state data system.

Agency IT Managers

Adult education data systems, now more than ever, operate within an ecosystem of agency applications, and under significant security, privacy and efficiency-related constraints. Agency IT managers are well versed in the range of technical considerations and specific requirements for implementing systems whether they operate within the agency or interact with others.

Legislative Staff Members

Federal and state legislators often make decisions about the direction and funding of adult education efforts. While they do not normally use data systems, they may receive accountability and other reports that influence their decisions. A greater understanding of their needs and concerns may improve the kinds of reports produced, and even data managed, by the system.

Learn About Your Stakeholders

To understand which functions and features are appropriate to include in your data system, consider the key characteristics of your stakeholders. Specifically, what are their responsibilities? What information do they need? What kinds of tasks do they do every day?

The best way to learn about your stakeholders is to spend some time with them. Ask questions, and try to understand the challenges of their jobs. What problems might the new data system help them solve? Some stakeholders may know exactly what to tell you about their needs and concerns regarding a new data system. Others may need a little guidance. The discovery process functions as a conversation to uncover the needs of system users. Get started by asking stakeholders questions, such as:

- What work do you do related to adult education? What are your overall or daily responsibilities? Activities?

- What kinds of adult education information do you use or need? (e.g., Student Records, Outcomes, Class Management)

- How do you use the current data system? (e.g., getting information for decision-making, record keeping)

- What do you like or dislike about the current system?

- What are the greatest challenges you face related to data in your work?

- Are there particular operational or data management standards, regulations or constraints with which the system would need to comply? (e.g., student identifiers, security, privacy, access)

- With whom might you need to share data or reports from the system?

- Are there others we should ask about their adult education data use needs?

Hidden Stakeholders

In gathering information about the system and the needs it must address, be sure to consider your hidden stakeholders. These individuals may not be actual users, but they have an interest in how the system operates or how its information is used. Discussing technology needs with IT managers, reporting needs with legislative staff, and data-sharing needs with partner agencies will help you better understand your system's operating constraints and uncover requirements that might otherwise go unnoticed.

Phase II–Requirements Definition

Requirements Definition is all about documenting what was learned in a way that enables system developers or vendors to respond to your needs. During requirements definition, you will create a specification that describes a system's purpose, users and how they will use the system, as well as specific inputs, outputs and functionality. These aspects of a system characterize the elements upon which it is built.

There are different approaches to defining data system requirements and system elements. Your approach will depend upon who will read and act on the requirements, how they will use them, and the level of detail required. To see a specification covering the basics, check out [link to New Hampshire sample].

Below, we identify a few ways that you can capture and document a system's inputs, outputs and functionality for sharing with a vendor or developer.

Use Cases and Functionality

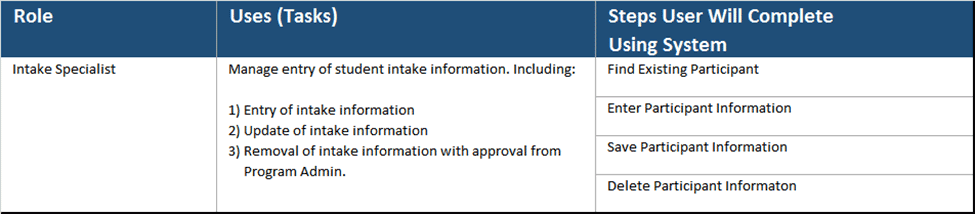

Features that a system must provide are often described by documenting specific use cases, which outline the process an individual in a particular role might use to accomplish a task. Here is a sample:

A collection of use cases may include many rows, each describing a different task that users might need to complete. The role column specifies who might use the feature to complete a specific task. The uses column lists a collection of tasks, or uses, for which an individual in that role might access the system. The steps column describes the steps a user would take to complete each task.
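To make the table structure concrete, here is a minimal Python sketch of a use-case collection. The `UseCase` class and `uses_by_role` helper are hypothetical, and the rows are illustrative examples drawn from the user roles in the sample specification, not a complete set.

```python
from dataclasses import dataclass, field


@dataclass
class UseCase:
    """One row of a use-case table: the role, the task (use), and its steps."""
    role: str
    use: str
    steps: list[str] = field(default_factory=list)


# Illustrative rows only, based on roles in the sample specification.
USE_CASES = [
    UseCase("Instructor", "Record contact hours",
            ["Open the class attendance screen", "Select a student",
             "Enter hours and the period they apply to", "Save the entry"]),
    UseCase("Instructor", "Find students needing posttests",
            ["Run the Students Needing Posttests report"]),
    UseCase("Program Administrator", "Set up a class",
            ["Open class setup", "Enter class details", "Assign an instructor"]),
]


def uses_by_role(cases: list[UseCase]) -> dict[str, list[str]]:
    """Collect the uses for each role, as in the table's role and uses columns."""
    grouped: dict[str, list[str]] = {}
    for case in cases:
        grouped.setdefault(case.role, []).append(case.use)
    return grouped
```

Keeping use cases in a structured form like this (or simply a spreadsheet with the same three columns) makes it easier to check that every role has its tasks covered before the specification goes to a vendor.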

Data Dictionary and Inputs

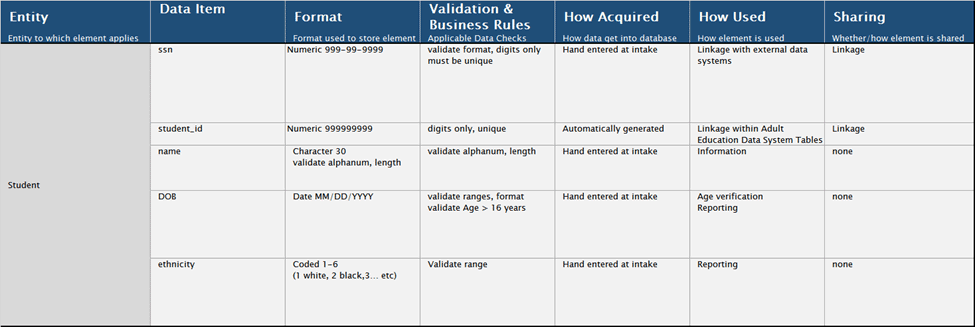

A data dictionary may be created to document the kinds of information used by the system, their formats, information for data-entry error checking, and even business rules. Such a detailed listing can take considerable effort and collaboration to create.

Data dictionaries often have hundreds of entries or more. For states building a data system, particularly as part of an interagency effort, the data dictionary provides common definitions often discussed or negotiated across agency lines. For those procuring an off-the-shelf system, a simpler listing of data fields needed might suffice.

This excerpt from a data dictionary includes the following information:

- Entity – A person or thing about which information is stored. This entry lists information that the system will store for individual students.

- Data item – Particular characteristics or pieces of information related to the entity. In the example above, there are listings for student social security number, student ID number, name, date of birth and ethnicity.

- Format – A technical description showing how the data item will be represented. For example, student name can be a string of, at most, 30 characters.

- Business rules – A description of any business rules that apply to a data item. For example, users cannot enter a student date of birth in the future, or for an individual younger than 16 years old.

- How acquired – Indication as to how the information is acquired. Much of the time, it may be hand-entered. But it is possible that data are transferred from another system or even calculated.

- How used – A brief description about how the data item will be used.

- Sharing – An indication as to whether, with whom and how a data item will be shared.
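The columns above map naturally onto a record per data item, with business rules expressed as checks. The following minimal Python sketch assumes the two sample rules mentioned in this section: a name of at most 30 characters, and a date of birth that is neither in the future nor for someone younger than 16. The `DataItem` class, the `validate` function and the placeholder values in the how-acquired, how-used and sharing columns are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Optional

MIN_AGE = 16        # from the sample business rule above
MAX_NAME_LEN = 30   # from the sample format for student name


def age_on(dob: date, today: date) -> int:
    """Whole years of age on `today` for someone born on `dob`."""
    had_birthday = (today.month, today.day) >= (dob.month, dob.day)
    return today.year - dob.year - (0 if had_birthday else 1)


def check_name(value: str, today: date) -> Optional[str]:
    if not value.strip():
        return "Name is required."
    if len(value) > MAX_NAME_LEN:
        return f"Name must be at most {MAX_NAME_LEN} characters."
    return None


def check_dob(value: date, today: date) -> Optional[str]:
    if value > today:
        return "Date of birth cannot be in the future."
    if age_on(value, today) < MIN_AGE:
        return f"Student must be at least {MIN_AGE} years old."
    return None


@dataclass(frozen=True)
class DataItem:
    entity: str
    name: str
    fmt: str
    rule: Callable[..., Optional[str]]  # returns an error message or None
    how_acquired: str
    how_used: str
    sharing: str


# Hypothetical placeholder values for the acquired/used/sharing columns.
DICTIONARY = {
    "name": DataItem("Student", "Name", "string, max 30 chars", check_name,
                     "Hand-entered at intake", "Identification and reports", "Not shared"),
    "dob": DataItem("Student", "Date of birth", "date", check_dob,
                    "Hand-entered at intake", "Age for reporting", "Not shared"),
}


def validate(record: dict, today: date) -> dict[str, str]:
    """Return {field: error message} for every field that fails its rule."""
    errors = {}
    for key, item in DICTIONARY.items():
        value = record.get(key)
        if value is None:
            errors[key] = f"{item.name} is required."
            continue
        message = item.rule(value, today)
        if message:
            errors[key] = message
    return errors
```

Passing `today` in explicitly, rather than reading the clock inside each check, keeps the rules easy to test.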

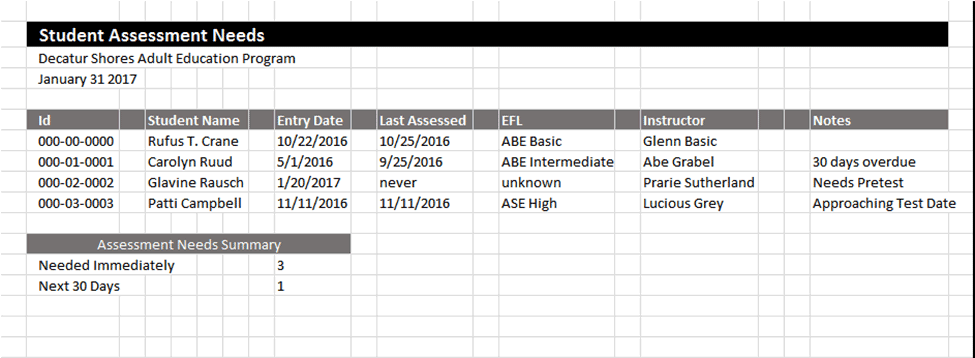

Reports and Outputs

Outputs, often in the form of reports, provide much of the value of a data system. They deliver insights about student outcomes, offer tools for instructors, and supply information for NRS accountability. As part of a requirements document, you might list the reports needed (with a brief description) or provide a prototype if layout and formatting are important.

If working with developers to create the reports, a prototype provides detailed information and helps assure that the right information is included, in a format that is most useful to your users.

In contrast, if procuring the system from a vendor, a simple list might suffice – as you will likely have less influence over how the reports are generated or their format.

Whatever methods you use to describe the system, be sure to include an overview of its purpose and users. This helps provide context for a developer or vendor, allowing them to better understand your needs and offer a fitting solution.

Specifications, as mentioned above, should also provide some description of the system's functionality, inputs and outputs. In addition to documenting your needs, the process of creating a specification is valuable for thinking them through, while helping your staff and partner agencies develop a common vision for the system.

Phase III–Evaluate and Implement

After you have shared your system requirements with vendors or developers, they will get back to you with their ideas as to how your system can be built. If the system is being created from scratch, their response may be more detailed and include technical information. If a vendor proposes an off-the-shelf solution, you might receive general information, including a list of system functions, reports and special features, without much detail as to how they work. A proposal for a system customized from an off-the-shelf base will likely describe how the system you receive differs from the base version.

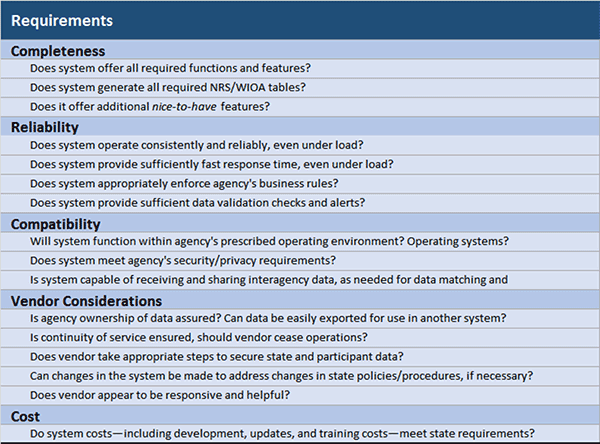

To ensure that a proposed system meets your needs, compare its features and functions with those described in the requirements document created during Phase II. But don't stop there. Basic functionality is one of a number of considerations that determine whether a system is suitable. For example, a proposed system that runs reliably and generates accurate reports is more likely to be successful than one that does not. A system that is easy to use, and provides features to assist individuals entering data or running reports, is more likely to be embraced by users and operated effectively than one that is cumbersome to use.
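The first step, comparing a proposal's functions with your requirements, is essentially a set comparison. The Python sketch below is a hypothetical helper; the required function names come from the sample specification in this tool, while the proposal contents are invented for illustration.

```python
def coverage(required: set[str], proposed: set[str]) -> dict:
    """Compare required functions with those a proposal says it offers."""
    met = required & proposed
    return {
        "met": sorted(met),
        "missing": sorted(required - proposed),   # required but not offered
        "extra": sorted(proposed - required),     # offered but not required
        "percent_met": round(100 * len(met) / len(required), 1) if required else 100.0,
    }


REQUIRED = {"Student Lookup", "New Student Entry", "Add Test Score",
            "Add Contact Hours", "Data Match"}
# Hypothetical vendor proposal, for illustration only.
PROPOSAL = {"Student Lookup", "New Student Entry", "Add Test Score",
            "Add Contact Hours", "Custom Dashboards"}
```

A high match percentage only speaks to basic functionality; reliability, accuracy and ease of use still need to be assessed separately.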

To help you assess the merits of systems you are considering, review the factors that commonly affect a system's success. Ask whether proposed solutions meet these criteria. If they do not, you are more likely to run into difficulties.

Factors such as cost and advertised features are relatively easy to assess based upon a vendor proposal. You can get an idea about the system's reliability by taking a test drive or asking existing customers. Having run the system in a production environment, they are likely to know how it will operate under load and how well it deals with unusual circumstances. Does end of the year processing work well? How about special cases, like returning students? Are NRS Tables generated properly? Ask your IT department to assess the system for compatibility with the agency's infrastructure, security, privacy and similar considerations.

Phase IV–Quality Assurance

New systems are usually equipped with a sheen of excitement and optimism when first launched. Who wouldn't want spectacularly insightful reports, gleaming pulldown menus, and ever-ready dashboards packed with useful information? Unfortunately, the implementation of a new system usually comes with a few glitches along the way, which can temper the optimism with a dash of concern. To ensure that a newly implemented system operates properly and meets your expectations, you should conduct a formal testing activity, and may also want to conduct a usability test.

Testing System Quality

With many functions, business rules, data checks and an array of reports, dashboards and data-sharing features, modern data systems are difficult to test in a comprehensive way. To help verify that a system's core capabilities are functioning, and to test a wide range of user styles and special cases, design a multifaceted testing process.

Start by creating a test plan that prescribes specific test scenarios. These scenarios can be used by internal testers to verify that core functions work properly – to catch the most common and most significant error conditions.

Below are a few scenarios you might use in testing an NRS system. You can build on these samples to create your own, more comprehensive, collection of scenarios:

- Create a student intake record, and enter identifying and demographic information for a student who has never before visited an adult education center. Before entering complete and accurate data, leave all fields except name blank, then enter obviously erroneous dates of birth, ethnicity codes, address and phone information.

- Enter a pretest score for the student, using TABE as an assessment. Back out the score, and re-enter it.

- Enroll a student in an ABE class, consistent with the assessment score you entered.

- Enter contact hours for the student's first month in class. Try entering too many hours, and negative hours.
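Scenarios that exercise a specific business rule can also be written as automated checks that developers rerun after each fix. The Python sketch below covers the contact-hours scenario. The 200-hour monthly limit and the `check_contact_hours` function are hypothetical; the real limit should come from your business rules.

```python
MAX_MONTHLY_HOURS = 200  # hypothetical limit; use the one in your business rules


def check_contact_hours(hours: float) -> list[str]:
    """Return validation errors for a month's contact hours (empty if valid)."""
    errors = []
    if hours < 0:
        errors.append("Contact hours cannot be negative.")
    if hours > MAX_MONTHLY_HOURS:
        errors.append(f"Contact hours cannot exceed {MAX_MONTHLY_HOURS} per month.")
    return errors


# (label, hours entered, should the system accept it?)
SCENARIOS = [
    ("typical first month", 24, True),
    ("negative hours", -5, False),
    ("too many hours", 500, False),
    ("exactly at the limit", MAX_MONTHLY_HOURS, True),
]


def run_scenarios() -> list[str]:
    """Return the labels of scenarios whose outcome differed from expectations."""
    failures = []
    for label, hours, should_accept in SCENARIOS:
        accepted = not check_contact_hours(hours)
        if accepted != should_accept:
            failures.append(label)
    return failures
```

Automated checks like these complement, rather than replace, human testers working through the written scenarios.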

Assemble a group of testers to work through the scenarios, and report back issues that arise. You might create a reporting form or use an online bug tracker that enables testers to document issues that they find.

After the bugs identified by your testing team have been addressed, consider broadening your team of testers or opening the test to a group of your most dedicated data system users. These beta testers might use the test plan as a starting point, but should also conduct a more open-ended series of tests based upon their job roles. By opening the test process to broader and broader groups prior to system launch, you can test a much wider range of scenarios and identify more of the issues likely to come up during real-world operation.

As testers identify issues, developers can fix them. When the number of new issues drops to a minimum, the quality assurance process is complete.

Usability Testing

If you are working with a developer to create your new system, and have input into its navigational structure and design, consider conducting a usability test. Usability testing enables you to identify issues that individuals might encounter when operating the system. It helps you refine the system's operations, saving users time, boosting productivity and preventing user fatigue. An optimized user interface also helps prevent entry errors that lead to data-quality issues.

To run a usability test, you will create a set of scenarios that require the use of frequently accessed or labor-intensive functions. You may even be able to use some of the same scenarios used in your system quality test, described above. Recruit testers to work through the scenarios while you watch. Make note of particular sticking points. Have them talk through their progress, and thought process, as they work through the scenario. Share your insights with developers who can help you simplify operational flow, reduce confusion, or improve the user experience. For tips on effective usability testing, download a copy of our usability guide.

Phase V–Pre-Launch

During the Pre-Launch phase, you will prepare your new system for use. Particular items on a pre-launch to-do list might include: Data Conversion, System Configuration and Training.

Data Conversion

If you have student records in an existing database, you will need to move them for use with the new system. While at first this might appear to be a simple effort, differences in data item and table formats can introduce significant complexity into the process. Developers tasked with implementing the conversion will need clear directions as to how existing data are to be formatted and transformed. Because some data formerly maintained in your NRS data system database may now be shared with, or even stored in, a partner agency's database, collaboration between vendors may also be necessary.

If the data dictionary you created during phase II is complete and accurate, then much of the information for performing your data conversion will be available. By identifying data conversion needs early in the process, and involving your IT department, you can better plan for this activity.
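As an illustration of what clear conversion directions might look like, the Python sketch below maps one legacy record to a new layout and reports anything it cannot convert rather than silently dropping it. The legacy field names (`FNAME`, `LNAME`, `BIRTHDATE`, `ETH`), the MM/DD/YYYY date format and the ethnicity code mapping are all hypothetical; the 30-character name limit follows the sample data dictionary entry.

```python
from datetime import datetime

MAX_NAME_LEN = 30  # from the sample data dictionary format for student name

# Hypothetical legacy-to-new ethnicity code mapping; take the real one from
# your old and new data dictionaries.
ETHNICITY_MAP = {"H": "HISPANIC", "N": "NOT_HISPANIC"}


def convert_record(old: dict) -> tuple[dict, list[str]]:
    """Map one legacy record to the new layout, collecting any problems found."""
    problems = []
    name = f"{old.get('FNAME', '').strip()} {old.get('LNAME', '').strip()}".strip()
    if len(name) > MAX_NAME_LEN:
        problems.append(f"Name longer than {MAX_NAME_LEN} characters: {name!r}")
    new = {"name": name}

    raw_dob = old.get("BIRTHDATE", "")
    try:
        # Legacy dates assumed to be MM/DD/YYYY; the new layout stores ISO dates.
        new["dob"] = datetime.strptime(raw_dob, "%m/%d/%Y").date().isoformat()
    except ValueError:
        new["dob"] = None
        problems.append(f"Unparseable BIRTHDATE: {raw_dob!r}")

    code = old.get("ETH", "")
    new["ethnicity"] = ETHNICITY_MAP.get(code)
    if new["ethnicity"] is None:
        problems.append(f"Unknown ETH code: {code!r}")
    return new, problems
```

Collecting problems per record lets staff review and fix exceptions before launch instead of discovering them in reports.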

System Configuration

During the Pre-launch phase, you will also need to set up user accounts and enter program-specific information and other operational basics, such as classes, instructors, and assessments. Consider making a checklist of setup items, and determine who will enter the data. If programs are responsible for managing their own information, a portion of the training might be dedicated to helping them set up the new system for their program's use.

Training

Although your system may be intuitive and easy to operate, many individuals will need some training to get started using it. Depending upon the number of users, logistics, and available resources, you might choose to schedule an in-person training, webinar, self-guided tour or other type of activity to help users become familiar with the system's features and procedures for entering data. The training program should be consistent with state requirements and business rules.

Phase VI–Support

Once your users are actively working with the system, issues will likely arise. Users will have questions as to how to handle particular situations, for example students enrolled in multiple programs or those returning after an extended absence. Upfront planning to support your users will help them make the most of your new data system, and enable them to enter data that are both accurate and up-to-date.

There are numerous ways to provide support, and you may want to implement a tiered method to help address the range of basic operational questions, software issues and policy-related quandaries quickly and appropriately.

Basic User Operations

To address basic operating questions, written documentation and context-sensitive help are great tools. Short, to-the-point answers for users trying to enter attendance hours or assessment scores are easy to provide, and can save your users a great deal of time, especially when the information is easy to find. For beginning users, consider creating some short how-to videos or screencasts.

Technical and Policy Questions

To address more challenging issues, such as software bugs, you might designate a staff member to receive and respond to queries. E-mail, text messages, or even phone support provide quick channels for users to request the help they need. A vendor may also provide this function. Consider in advance the kinds of assistance that will be needed, and prepare by assembling a list of contacts to address technical questions, system failures and policy-related questions.

System Issues and Improvements

To understand and address ongoing challenges, like improving system features and performance, consider forming a user group. Members might meet quarterly, or even monthly, to discuss user needs, new reports, upgrades and other aspects of the system that affect all users. Feedback from a user group can inform system upgrades, and help you identify training needs and policy issues to address.

Your Next Steps

Each stage of the process to implement a new data system comes with particular tasks and challenges. The steps outlined in this guide are designed to help you understand what it takes to gather your thoughts, conceptualize what needs to be built, implement a reliable solution and provide for your stakeholders. However, system implementation is a complex activity that requires the expertise of professionals with a variety of skill sets. With these basics of a robust process in hand, you have a road map for guiding your team to a successful data system implementation.

Specification Sample

The elements of a specification for developing a data system depend upon several factors, including:

- Whether you plan to implement a custom-built or off-the-shelf option

- Whether development will be performed in-house or by a vendor

- The level of expertise of the development team

The art and science of crafting a specification includes thoughtful consideration of the elements of the system, the characteristics of the system to be communicated, and who will use the specification and for what purpose.

The sample specification included as part of this tool provides basic information that you might include in a vendor RFP. A more detailed version might provide information for system designers or developers; where more detail is needed, allow additional time to prepare the specification.

Because NRS data systems often operate in an interagency context, collaboration between adult education, workforce, system development, information technology and other professionals may be required to provide information on agency-wide IT requirements, consistent data definitions, security and other key factors.

Regardless of the intended audience, specifications generally include a brief overview of the system's purpose, information about the system's key users and their roles, system outputs, functions, inputs and any operating constraints. Our sample specification follows.

Users and Roles

We anticipate that the system will be operated by staff members in the state office, and by local program directors, administrative assistants and instructors.

Each program will have its own set of student records and reporting capabilities – separate and apart from the records of other programs. State staff will be able to review local student records, monitor the process of local data entry and generate both individual program and aggregate statewide reports.

The following section outlines basic functions that will be required by system users:

State Staff

| Function | Description |
| --- | --- |
| System Configuration | Set up parameters, operational rules and data for assessment, intake and follow-up activities, including allowable tests, assessment timelines, and information required for follow-up activities. |
| Program Setup | State staff will have the ability to set up student records management capabilities for individual programs, and provide access for local program staff. |
| Accountability Reporting | State staff will have the ability to generate all NRS and WIOA tables, plus reports to monitor local program activities including enrollment, advancement, achievement, separation and follow-up for students. |
| Decision Support | State staff will have tools for understanding demographics, achievement, and operational efficiency at both the program and state level. Ideally, data exploration, dashboards and business intelligence tools will provide the flexibility and insight needed to support program improvement activities. |
| Data Matching and Sharing | State staff will be able to access WIOA-relevant data from other agencies needed to generate accountability reports, and provide support for local program operations. |

Local Program Directors/Staff

| Function | Description |
| --- | --- |
| Monitor Operations | Program Directors and their staff will have the ability to review the status of key operational activities including enrollment, attendance, assessment, advancement, and follow-up tracking. |
| Decision Support | Program Directors and staff will use dashboards and data analysis tools to review progress toward particular outcome-related goals, and the underlying factors that might affect that progress. |

Program Administrators

| Function | Description |
| --- | --- |
| Teacher Information Management | Program administrators will enter basic contact information about teachers serving students in the program. |
| Class Setup | Program administrators will enter information about managed enrollment and unstructured classes into which students are enrolled. |
| Management Reports | Program administrators will run management reports on behalf of Program Directors and staff. |
| Follow-up | Program administrators will use the system to determine when to follow up with students, and record achievements over time. |

Instructors

| Function | Description |
| --- | --- |
| Student Management | Instructors will use the system to obtain student profile information. |
| Attendance | Instructors will use the system to record contact hours for their students. |
| Assessment Readiness | Instructors will use the system to obtain reports on students needing post-testing. |
| Assessment Completion | Instructors will use the system to record the results of student pretests and posttests. |

Outputs

The system will generate reports to support state compliance with NRS requirements under WIOA. In addition, it will provide reports and tools to support program operations and state planning. See the NRS TA Guide (http://nrsweb.org/policy-data/nrs-ta-guide) to download the layouts of each NRS table.

Additional reports for program management include:

- Student Profile Report

- Students Needing Posttests

- Class Contact List

- Inactive Students Report

- Student Attendance Report

- Students Needing Follow-up Report
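Several of these reports reduce to simple rules over the student records. As one example, an Inactive Students Report might list students with no recorded attendance for a set number of days. The 30-day threshold and the `inactive_students` function below are hypothetical assumptions; the actual definition of "inactive" should come from state or program policy.

```python
from datetime import date, timedelta
from typing import Optional

INACTIVE_AFTER_DAYS = 30  # hypothetical threshold; set per state or program policy


def inactive_students(last_attended: dict[str, Optional[date]], today: date,
                      days: int = INACTIVE_AFTER_DAYS) -> list[str]:
    """IDs of enrolled students with no attendance in the last `days` days.

    `last_attended` maps student ID to the latest date with recorded contact
    hours (None if none yet). Separated students are assumed to be excluded
    before this is called, since they no longer appear in participant lists.
    """
    cutoff = today - timedelta(days=days)
    return sorted(sid for sid, last in last_attended.items()
                  if last is None or last < cutoff)
```

Making the threshold a parameter lets a state or program adjust the definition without changing the report logic.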

System Functions

To meet the needs of its users, as described above, the system will offer the following tools. Each tool will provide specific functions for managing student records, generating reports and alerts, preparing accountability reports, setting up the system and so on.

Student Records Manager

The Student Records Manager provides a method for entering basic information about individuals who enter an adult education program. It consists of a single data entry screen to capture: student name, SSN, DOB, ethnicity, gender, address, home phone, mobile phone, and email address. As specified in the data dictionary and business rules sections of this specification, data entry validation checks will prevent entry of erroneous and improperly formatted data. It will provide the following functions:

| Function | Description |
| --- | --- |
| Student Lookup | Ability to search for students by name, student ID, email address or phone number. |
| New Student Entry | Ability to enter information for a new student, after checking that the student is not already entered into the system. Each program will have its own set of student records, but the system will attempt to populate new student records with existing contact and demographic information if the individual is found in another program's records. |
| Update Student Entry | Ability to make changes to a student's contact or demographic information consistent with the data dictionary and business rules outlined later in this document. |
| Separate Student | Ability to mark the student as separated from the program. It requires entry of a separation date and reason for separation, consistent with rules specified in the business rules and data dictionary sections of this document. Once separated, students no longer appear in participant or class lists. |
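The duplicate check and cross-program prepopulation described for New Student Entry could work roughly as in the Python sketch below. Matching people across programs on email address is a hypothetical choice made for illustration only; a real system might match on SSN or another identifier named in the data dictionary, subject to privacy rules. The `Student` class and the function names are also hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Student:
    program_id: str
    student_id: str
    name: str
    email: str = ""
    phone: str = ""


def digits(text: str) -> str:
    return "".join(ch for ch in text if ch.isdigit())


def lookup(records: list[Student], program_id: str, term: str) -> list[Student]:
    """Search one program's records by name, student ID, email or phone."""
    t = term.strip().lower()
    d = digits(term)
    if not t:
        return []
    return [
        r for r in records
        if r.program_id == program_id and (
            t in r.name.lower()
            or t == r.student_id.lower()
            or (bool(r.email) and t == r.email.lower())
            or (bool(d) and d == digits(r.phone))
        )
    ]


def same_person(a: Student, b: Student) -> bool:
    # Hypothetical matching key: a non-empty, case-insensitive email match.
    return bool(a.email) and a.email.lower() == b.email.lower()


def new_student_entry(records: list[Student], candidate: Student) -> Student:
    """Add a student to a program, refusing duplicates and prefilling blanks."""
    for r in records:
        if r.program_id == candidate.program_id and same_person(r, candidate):
            raise ValueError(f"Already enrolled in this program as {r.student_id}")
    for r in records:
        if r.program_id != candidate.program_id and same_person(r, candidate):
            # Copy contact details only where the new entry left them blank.
            candidate = replace(candidate, phone=candidate.phone or r.phone)
            break
    records.append(candidate)
    return candidate
```

Records stay separate per program; only blank fields in the new record are filled from another program's copy.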

Assessment Manager

The Assessment manager provides functions for entering and updating student assessment scores. It consists of an entry screen that lists all pretest and posttest scores for a student during their current period of participation, and a selection screen from which instructors or program administrators can view a list of students in their program (or class), and select one for updating.

The listing of students includes student name, posttest eligibility date, date of last assessment, latest test score and EFL. It can be arranged by name, posttest eligibility date or date of most recent assessment.

The entry page includes past assessment scores during the current period of participation, for read-only access. For each assessment, date, score and instrument used must be entered. Educational Functioning Level (EFL) is generated automatically. Entries are validated consistent with formats and ranges specified in the data dictionary, and according to the rules specified in the business rules section of this document.
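Automatic EFL calculation can be implemented as a lookup of the scale score against a table of level boundaries. The Python sketch below shows that lookup. The instrument name, score range and cut scores are PLACEHOLDERS, not actual NRS or TABE values; substitute the published values for each approved assessment.

```python
from bisect import bisect_right

# PLACEHOLDER values only: these are NOT actual NRS or TABE cut scores.
# Each entry: (valid score range, lower bound of each level after the first, level names).
EFL_TABLES = {
    "EXAMPLE_TEST": (
        (200, 800),
        [400, 450, 500, 550, 600],
        ["Level 1", "Level 2", "Level 3", "Level 4", "Level 5", "Level 6"],
    ),
}


def efl_for(instrument: str, score: int) -> str:
    """Derive an EFL by finding which score band the scale score falls in."""
    (low, high), bounds, levels = EFL_TABLES[instrument]
    if not low <= score <= high:
        raise ValueError(f"Score {score} outside valid range {low}-{high} for {instrument}")
    # bisect_right counts how many lower bounds the score has reached.
    return levels[bisect_right(bounds, score)]
```

Keeping the cut scores in data rather than code means a new assessment or revised table can be added during system configuration without reprogramming.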

Functions:

| Function | Description |
| --- | --- |
| Select Student | Ability to search and/or select student from class or program list of students. |
| Add Test Score | Function to add information about a pre/post test, including date and score. System should provide checks to prevent data entry or business rule issues. EFL should be calculated automatically. |
| Update Test Score | Function to update information about a test score, consistent with data validation and business rules, with the intent of correcting erroneous entries. Supervisory approval should be required to update. |
| Remove Test Score | Remove test score that was entered erroneously. Supervisory approval should be required to remove. |
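One way to enforce the supervisory-approval requirement is to treat each update or removal as a pending request that only takes effect when an authorized supervisor approves it. In this Python sketch, the supervisor list, the `ScoreChange` class and the rule that requesters cannot approve their own changes are hypothetical assumptions.

```python
from dataclasses import dataclass
from typing import Optional

SUPERVISORS = {"director1"}  # hypothetical user IDs allowed to approve changes


@dataclass
class ScoreChange:
    action: str            # "update" or "remove"
    score_id: int
    requested_by: str
    new_score: Optional[int] = None
    approved_by: Optional[str] = None


def approve_change(scores: dict[int, int], change: ScoreChange, approver: str) -> None:
    """Apply a requested update or removal only once a supervisor approves it."""
    if approver not in SUPERVISORS:
        raise PermissionError(f"{approver} is not authorized to approve changes")
    if approver == change.requested_by:
        # Assumed policy: no one approves their own correction.
        raise PermissionError("Requester cannot approve their own change")
    if change.score_id not in scores:
        raise KeyError(change.score_id)
    if change.action == "update":
        if change.new_score is None:
            raise ValueError("An update needs a new score")
        # A full system would rerun the usual validation on new_score here.
        scores[change.score_id] = change.new_score
    elif change.action == "remove":
        del scores[change.score_id]
    else:
        raise ValueError(f"Unknown action: {change.action}")
    change.approved_by = approver
```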

Attendance Manager

The Attendance manager provides functions for entering and updating student attendance hours. It consists of an entry screen that lists a student's attendance history during their current period of participation, and a screen from which instructors or program administrators can view a list of students in their class, and select one for updating. The listing of students includes the student name, identification number, enrollment date, and last date for which attendance hours were entered.

The entry page includes past attendance hour entries during the current period of participation, for read-only access. For each entry, the number of contact hours, and the period to which they apply, must be entered. A comment may be added to an entry. Entries are validated consistent with formats and ranges specified in the data dictionary, and according to the rules specified in the business rules section of this document. Once entered, entries may not be deleted. However, adjusting entries are allowed, and each must include a note explaining why it was necessary.

It will provide the following functions:

| Function | Description |
| --- | --- |
| Add Contact Hours | Function to allow entry of contact hours and dates to which they apply. |
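The rule that attendance entries are never deleted, only corrected by adjusting entries with a note, describes an append-only ledger. Here is a minimal Python sketch; the class names and validation messages are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class AttendanceEntry:
    student_id: str
    period_start: date
    period_end: date
    hours: float
    comment: str = ""
    adjusts: Optional[int] = None   # index of the entry this one corrects


class AttendanceLedger:
    """Append-only attendance log: entries are never deleted or edited.

    Corrections are recorded as adjusting entries (for example, negative
    hours) that reference the original entry and carry an explanatory note.
    """

    def __init__(self) -> None:
        self._entries: list[AttendanceEntry] = []

    def add(self, entry: AttendanceEntry) -> int:
        if entry.period_end < entry.period_start:
            raise ValueError("Period end precedes period start")
        if entry.adjusts is None:
            if entry.hours < 0:
                raise ValueError("Contact hours cannot be negative")
        else:
            if not 0 <= entry.adjusts < len(self._entries):
                raise ValueError("Adjustment must reference an existing entry")
            if self._entries[entry.adjusts].student_id != entry.student_id:
                raise ValueError("Adjustment must be for the same student")
            if not entry.comment.strip():
                raise ValueError("Adjusting entries require a note explaining why")
        self._entries.append(entry)
        return len(self._entries) - 1

    @property
    def entries(self) -> tuple[AttendanceEntry, ...]:
        return tuple(self._entries)  # read-only view of the history

    def total_hours(self, student_id: str) -> float:
        return sum(e.hours for e in self._entries if e.student_id == student_id)
```

Because the original entry and its correction both remain, the attendance history stays auditable while totals still reflect the corrected hours.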

Outcomes Manager

The Outcomes manager provides functions for entering and updating student employment and educational outcomes. It consists of an entry screen that lists any student outcomes for the current period of participation, and a screen from which instructors or program administrators can view a list of students in their program or class, and select one for updating.

The listing of students includes the student name, identification number, enrollment date, date of latest outcome, and brief description of the outcome.

The entry page displays a student's outcomes for the current period of participation as read-only. For each entry, the type and date of the outcome must be entered. Notes pertaining to required follow-up may be added to an entry. Because the NRS under WIOA defines specific reportable outcomes, the system will provide a dropdown list from which an outcome may be selected.

The Outcomes manager should also accept information about student outcomes from a data-match file. The file should contain rows for individual student outcomes, and columns that apply to the outcome. Specifically, each row should include the student identification number for matching, outcome type, date of outcome and supporting documentation/notes.

Entries are validated consistent with formats and ranges specified in the data dictionary, and according to the rules specified in the business rules section of this document.

Functions:

| Select Student | Ability to search for and/or select a student from a class or program list of students. |

| Add Outcome | Function to add information about an outcome, including date and type of outcome, and notes/supporting documentation. The system should provide checks to prevent data entry or business rule issues. |

| Update Outcome | Function to update information about an outcome, consistent with data validation and business rules, with the intent of correcting erroneous entries. Supervisory approval should be required to update. |

| Remove Outcome | Remove an outcome that was entered erroneously. Supervisory approval should be required to remove. |

| Data Match | Function to import a data match file. A user enters the data match file name, then clicks submit. The system must have a mechanism in place to validate the match information and save it in the student records database. |
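The data-match import can be sketched as a CSV reader that checks each row before anything is saved. This Python sketch makes three assumptions:

* The file is comma-separated with the hypothetical header `student_id,outcome_type,outcome_date,notes`.
* The outcome codes are placeholders.
* Accepted rows are returned rather than saved. A real system would load the NRS/WIOA outcome list and write accepted rows to the student records database.

```python
import csv
import io
from datetime import date
from typing import Dict, List, Set, Tuple

# Placeholder outcome codes; a real system would load its configured NRS/WIOA list.
OUTCOME_TYPES = {"EMPLOYED_Q2", "EMPLOYED_Q4", "SECONDARY_CREDENTIAL", "POSTSECONDARY_CREDENTIAL"}


def import_data_match(text: str, known_students: Set[str]
                      ) -> Tuple[List[Dict[str, object]], List[Tuple[int, List[str]]]]:
    """Split data-match rows into accepted records and (line number, errors) rejections."""
    accepted: List[Dict[str, object]] = []
    rejected: List[Tuple[int, List[str]]] = []
    reader = csv.DictReader(io.StringIO(text))
    # Line numbers assume no quoted multi-line fields; line 1 is the header.
    for line_no, row in enumerate(reader, start=2):
        errors: List[str] = []
        sid = (row.get("student_id") or "").strip()
        if sid not in known_students:
            errors.append("unknown student_id")
        otype = (row.get("outcome_type") or "").strip()
        if otype not in OUTCOME_TYPES:
            errors.append("invalid outcome_type")
        try:
            odate = date.fromisoformat((row.get("outcome_date") or "").strip())
        except ValueError:
            errors.append("outcome_date is not a valid YYYY-MM-DD date")
            odate = None
        if errors:
            rejected.append((line_no, errors))
        else:
            accepted.append({"student_id": sid, "outcome_type": otype,
                             "outcome_date": odate,
                             "notes": (row.get("notes") or "").strip()})
    return accepted, rejected
```

Each rejected row is returned with its line number. This lets the user correct the file and resubmit, rather than having unmatched students silently dropped.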

Follow-up Manager

The Follow-up manager provides functions for entering and updating follow-up information related to student outcomes. It consists of an entry screen that lists any student outcomes for the current period of participation, and a screen from which instructors or program administrators can view a list of students in their program or class, and select one for entering follow-up information. Follow-up information can also be imported via data match.

The selection list of students includes the student name, identification number, enrollment date, date of latest outcome, brief description of the outcome, date of latest follow-up, and whether that follow-up was hand entered or data matched.
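Building one row of this selection list amounts to picking each student's most recent outcome and follow-up. The Python sketch below is illustrative only. The record classes are hypothetical, and so are the `hand_entered` and `data_matched` source labels.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class Outcome:
    student_id: str
    outcome_date: date
    description: str


@dataclass
class FollowUp:
    student_id: str
    followup_date: date
    source: str  # "hand_entered" or "data_matched" (hypothetical labels)


def selection_row(student_id: str, name: str, enrollment_date: date,
                  outcomes: List[Outcome], followups: List[FollowUp]) -> Dict[str, object]:
    """Build one row of the follow-up selection list for a single student."""
    latest_outcome: Optional[Outcome] = max(
        (o for o in outcomes if o.student_id == student_id),
        key=lambda o: o.outcome_date, default=None)
    latest_followup: Optional[FollowUp] = max(
        (f for f in followups if f.student_id == student_id),
        key=lambda f: f.followup_date, default=None)
    return {
        "name": name,
        "student_id": student_id,
        "enrollment_date": enrollment_date,
        "latest_outcome_date": latest_outcome.outcome_date if latest_outcome else None,
        "latest_outcome": latest_outcome.description if latest_outcome else None,
        "latest_followup_date": latest_followup.followup_date if latest_followup else None,
        "latest_followup_source": latest_followup.source if latest_followup else None,
    }
```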

Functions:

| Select Student | Ability to search and/or select student from class or program list of students. |

| Add Follow-up | Function to add follow-up information for an existing outcome, including date, follow-up requirement being met (gained employment, retained employment, credential earned, etc.), and notes/supporting documentation. The system should provide checks to prevent data entry or business rule issues. |

| Update Follow-up | Function to update information about a follow-up, consistent with data validation and business rules, with the intent of correcting erroneous entries. Supervisory approval should be required to update. |

| Remove Follow-up | Remove a follow-up record that was entered erroneously. Supervisory approval should be required to remove. |

| Data Match | Function to import a data match file. A user enters the data match file name, then clicks submit. The system must have a mechanism in place to validate match information and save in the student records database. |

Report Manager

The Report manager provides access to the data system's reports. It consists of a menu of available reports with links to each one. Each report, including mandatory NRS tables and WIOA reports, can be run for particular date ranges, student demographics (age, ethnicity, gender), type of program (ABE, ESL), outcomes, and program location. The system will be capable of generating the NRS table referred to in Appendix A.
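A report's filter criteria can be modeled as a set of optional fields, each ignored when left unset. This Python sketch is an illustration that rests on several assumptions:

* The field names are hypothetical.
* The date range is applied to the enrollment date, which is one of several reasonable choices.
* Outcome filtering is omitted for brevity.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List, Optional


@dataclass
class StudentRecord:
    student_id: str
    enrollment_date: date
    age: int
    gender: str
    ethnicity: str
    program_type: str   # e.g. "ABE" or "ESL"
    location: str


@dataclass
class ReportFilter:
    start: date
    end: date
    program_type: Optional[str] = None
    location: Optional[str] = None
    gender: Optional[str] = None
    ethnicity: Optional[str] = None
    min_age: Optional[int] = None
    max_age: Optional[int] = None

    def matches(self, r: StudentRecord) -> bool:
        # Assumption: the date range is applied to the enrollment date.
        if not (self.start <= r.enrollment_date <= self.end):
            return False
        # Each optional criterion is ignored when left as None.
        checks = [(self.program_type, r.program_type), (self.location, r.location),
                  (self.gender, r.gender), (self.ethnicity, r.ethnicity)]
        if any(want is not None and want != have for want, have in checks):
            return False
        if self.min_age is not None and r.age < self.min_age:
            return False
        if self.max_age is not None and r.age > self.max_age:
            return False
        return True


def run_report(records: Iterable[StudentRecord], f: ReportFilter) -> List[StudentRecord]:
    return [r for r in records if f.matches(r)]
```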

System Setup Manager

The system setup manager provides functions for setting up the system for programs to use. It includes a page for adding:

| Basic Instructor Information | Name, identification number, contact information |

| Program Information | Program Name, Identifier, Location |

| Class Information | Class Name, Program Type, Identifier, Teacher identification number, Hours |

| Assessments | Assessment Name, Forms, Score Ranges, Mapping to EFL |
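The "Mapping to EFL" setting lets the system calculate an EFL automatically whenever a score is added. The Python sketch below assumes each assessment is configured with its forms, a valid score range, and ascending EFL cutoff scores. The assessment name, forms, and cutoffs in the example are made up. They do not correspond to any real test or to official NRS levels.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class AssessmentConfig:
    name: str
    forms: FrozenSet[str]
    min_score: int
    max_score: int
    # Ascending lower bounds: EFL n covers scores from efl_cutoffs[n-1] up to the next cutoff.
    efl_cutoffs: Tuple[int, ...]

    def __post_init__(self) -> None:
        if list(self.efl_cutoffs) != sorted(set(self.efl_cutoffs)):
            raise ValueError("cutoffs must be strictly ascending")
        if not self.efl_cutoffs or self.efl_cutoffs[0] != self.min_score:
            raise ValueError("first cutoff must equal the minimum score")

    def efl_for(self, form: str, score: int) -> int:
        """Return the 1-based EFL index for a valid score on a configured form."""
        if form not in self.forms:
            raise ValueError(f"form {form!r} not configured for {self.name}")
        if not (self.min_score <= score <= self.max_score):
            raise ValueError(f"score {score} outside {self.min_score}-{self.max_score}")
        return bisect_right(self.efl_cutoffs, score)
```

`bisect_right` keeps the lookup correct at boundaries: a score exactly equal to a cutoff falls into the higher level.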

Inputs

To provide the system functions described above, and generate required NRS and other outputs, the system must be able to manage certain types of information. The required data items for a WIOA data system are described in the NRS TA Guide, https://nrsweb.org/policy-data/nrs-ta-guide

Business and Validation Rules

The system will be capable of validating data when entered by an individual user, or imported from another source, such as data matching. The following is a list of data checks and validation rules to be implemented.

| Ethnicity Values | The system will allow entry only of standard NRS ethnicity codes. |

| Dates of birth | The student's date of birth will be entered and stored in the format YYYY-MM-DD. The system will ensure proper formatting, reasonable ranges, and appropriate student ages. Students must be 16 years of age or older. |

| Enrollment | The format of the enrollment date will be validated. The date of enrollment cannot be before the intake date. |

| Assessment | Assessment scores must be within range for the test and form administered. Students must be pretested before enrollment in a class. A posttest may not be given until the number of days set by state policy has passed since the last assessment. Assessments and forms must be appropriate for the program type. Score changes of greater than 10% will be flagged as potential entry errors and reported. |

| Attendance | A student's attendance hours may not exceed the contact hours for the class during the same period. Missing data will be reported if no attendance has been entered for more than 45 days. |

| Separation | Students are separated from a program 90 days after their last service was provided. |
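Several of these rules reduce to small, testable functions. The following Python sketch covers a subset of them:

* date-of-birth format and minimum age
* enrollment on or after intake
* the posttest interval
* the 10% score-change flag
* attendance limits
* the 45-day and 90-day rules

The function names are hypothetical. The 10% change is assumed to be relative to the previous score. The posttest interval is passed in as a parameter because the specification leaves it to state policy.

```python
import re
from datetime import date, timedelta
from typing import List

MIN_AGE = 16                # students must be 16 or older
ATTENDANCE_GAP_DAYS = 45    # report missing data after 45 days without attendance
SEPARATION_DAYS = 90        # separation 90 days after last service
SCORE_CHANGE_FLAG = 0.10    # flag score changes greater than 10%


def parse_iso_date(text: str) -> date:
    # Require exactly YYYY-MM-DD; fromisoformat then rejects impossible dates.
    if not re.fullmatch(r"\d{4}-\d{2}-\d{2}", text):
        raise ValueError("date must be in YYYY-MM-DD format")
    return date.fromisoformat(text)


def age_on(dob: date, on: date) -> int:
    # Subtract one year if the birthday has not yet occurred on `on`.
    return on.year - dob.year - ((on.month, on.day) < (dob.month, dob.day))


def check_intake(dob_text: str, intake: date, enrollment: date) -> List[str]:
    errors: List[str] = []
    try:
        dob = parse_iso_date(dob_text)
    except ValueError as exc:
        errors.append(f"date of birth: {exc}")
    else:
        if age_on(dob, intake) < MIN_AGE:
            errors.append("student must be 16 or older at intake")
    if enrollment < intake:
        errors.append("enrollment date precedes intake date")
    return errors


def posttest_too_early(last_assessment: date, posttest: date, min_days: int) -> bool:
    # min_days is the interval set by state policy.
    return (posttest - last_assessment).days < min_days


def large_score_change(previous: int, current: int) -> bool:
    # Assumption: the 10% is measured relative to the previous score.
    return abs(current - previous) > SCORE_CHANGE_FLAG * previous


def attendance_exceeds_class(student_hours: float, class_contact_hours: float) -> bool:
    return student_hours > class_contact_hours


def attendance_missing(last_entry: date, as_of: date) -> bool:
    return (as_of - last_entry).days > ATTENDANCE_GAP_DAYS


def separation_date(last_service: date) -> date:
    return last_service + timedelta(days=SEPARATION_DAYS)
```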

Counting Rules and Reporting Requirements

NRS reporting under WIOA requires an understanding of data items that must be collected and maintained by your state data system, rules for determining whether and how data are maintained, as well as specific reporting criteria and formulas.

This list of business rules specifies the types of data that your system must maintain about programs, staff and participants, and the conditions that govern how and when they may be entered. In addition to these federal business rules, each state may develop additional rules for entering and maintaining intake, enrollment, attendance, advancement, and outcome data for local programs. To ensure the accuracy and consistency of student records and program data, it is advisable to implement data checks within your data system based upon federal and state business rules.
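Layering state rules on top of federal ones can be as simple as running two lists of rule functions in order. This Python sketch is illustrative. `federal_min_age` encodes the age-at-intake rule listed among the NRS/WIOA business rules. `state_requires_county` is an invented example of a state addition.

```python
from typing import Callable, Dict, Iterable, List

Record = Dict[str, object]
Rule = Callable[[Record], List[str]]


def federal_min_age(record: Record) -> List[str]:
    # Federal rule: individuals must be no younger than 16 at intake.
    age = record.get("age_at_intake")
    if not isinstance(age, int) or age < 16:
        return ["federal: age at intake must be 16 or older"]
    return []


def state_requires_county(record: Record) -> List[str]:
    # Hypothetical state-specific rule, shown only to illustrate layering.
    return [] if record.get("county") else ["state: county of residence is required"]


def make_validator(federal: Iterable[Rule], state: Iterable[Rule]) -> Callable[[Record], List[str]]:
    """Combine federal rules with state additions; federal rules always run first."""
    rules = list(federal) + list(state)

    def validate(record: Record) -> List[str]:
        errors: List[str] = []
        for rule in rules:
            errors.extend(rule(record))
        return errors

    return validate
```

Keeping each rule as a separate function lets a state add or retire its own checks without touching the federal ones.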

NRS/WIOA Business Rules

| Data Element | Business Rules |
|---|---|
| Individual age | Individuals must be no younger than 16 at intake. |
| Individual ethnicity | The ethnicity of individuals may be reported as one of the following: |
| Individual Participation Status | Participation status is recorded as individuals progress through a program, as follows: |
| Individual Life Circumstances | One of the following life circumstances may be designated for individuals at any time during their participation: |
| Individual Employment Status | One of the following employment statuses is recorded at intake: |
| Education Level | One of the following education statuses is recorded at intake: |
| Individual income | Income from a job the individual acquired while participating in the program, or after exit. |
| Individual period of participation Dates | Program entry and exit dates for individuals are recorded as follows: … Final contact before a lapse of 90+ days |
| Program Types | Participants may be enrolled in only one of the following programs during a period of participation: |
| Program Content | Students may be enrolled in programs that are funded to provide assistance in one or more of the following areas: |
| Instructional Environment | Participants may be enrolled in a program operating in one of the following environments: |
| Assessment | Assessment results must be entered when a student enters a program and when the individual is retested, consistent with state policy or guidelines, for those in the following participation statuses: |
| EFL | Individuals who have been tested are assigned a single Educational Functioning Level (EFL) based upon their assessed score or Carnegie Units completed. A student is assigned an EFL each time they are assessed or complete an appropriate number of Carnegie Units to advance. |
| Enrollment | Only Participants and Participant/Inmates may be enrolled, as follows: |
| Contact Hours | Contact hours are entered only for Reportable Individuals and Participants: |
| Measurable Skill Gains | Measurable Skill Gains (MSGs) for participants may be entered when: … Multiple gains may be entered. |
| Outcomes: Employment | Employment outcomes for participants may be entered when: … Employment outcomes must be verified/entered as of the second quarter after exit and the fourth quarter after exit. Multiple employment outcomes may be entered. |
| Outcomes: Credential | Credential outcomes may be entered for participants as follows: Secondary Credential; Postsecondary Credential |
| Outcomes: Family Literacy | The following outcomes may be recorded for participants in family literacy programs: |
| Staff Roles | Staff members for programs administered under AEFLA are characterized by one of the following job functions: |
| Staff Organizational Level | Staff members for programs administered under AEFLA are designated by one of the following organizational levels: |
| Staff employment status | Staff members for programs administered under AEFLA are designated by one of the following employment statuses: |
| Paid Staff Experience and Credentials | Experience and credentials are maintained for paid staff members of programs administered under AEFLA: |
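Two of the date rules in this table can be computed directly: the 90-day lapse that ends a period of participation, and the second- and fourth-quarter-after-exit employment checks. This Python sketch makes two assumptions:

* A gap of 90 or more days between contacts closes a period, and the exit date is the final contact before the gap.
* "Quarter after exit" means the calendar quarters following the quarter in which exit occurred.

The last period returned may still be open if the most recent contact is less than 90 days old.

```python
from datetime import date
from typing import Iterable, List, Tuple

LAPSE_DAYS = 90


def periods_of_participation(contact_dates: Iterable[date],
                             lapse_days: int = LAPSE_DAYS) -> List[Tuple[date, date]]:
    """Group contact dates into (entry, exit) pairs.

    Assumption: a gap of lapse_days or more between consecutive contacts
    closes the current period, whose exit is the final contact before the gap.
    """
    periods: List[List[date]] = []
    for d in sorted(set(contact_dates)):
        if periods and (d - periods[-1][1]).days < lapse_days:
            periods[-1][1] = d          # still inside the current period
        else:
            periods.append([d, d])      # first contact, or first contact after a lapse
    return [(start, end) for start, end in periods]


def quarter_after_exit(exit_date: date, n: int) -> Tuple[int, int]:
    """Return (year, quarter) of the nth calendar quarter after the exit quarter."""
    index = exit_date.year * 4 + (exit_date.month - 1) // 3 + n
    return index // 4, index % 4 + 1
```

For example, an exit on 2024-05-10 falls in the second quarter of 2024. The second quarter after exit is therefore the fourth quarter of 2024, and the fourth quarter after exit is the second quarter of 2025.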

Final Thoughts

The sample specification provides both a sample and a building block from which you can create your own system description, whether for an RFP or to share with in-house developers. It covers system elements that are commonly included in NRS data systems, although sometimes under different names.

As a foundation for additional work, it is designed to help you get started quickly and help shorten the effort to create your own NRS data system specification.